The Site That Optimizes Itself.

How we built a dual-MCP SEO and AEO engine that runs keyword research, identifies content opportunities, and commissions updates autonomously — compounding site authority without a human operator in the loop.

The Problem

SEO and Answer Engine Optimization (AEO) work is slow, manual, and easy to drop. Keyword research, content decay analysis, striking-distance opportunity identification, on-page fixes, and AEO updates all compete for attention that is never consistently available.

SEO compounds. Every week without optimization is a week of compounding authority the site doesn't earn. A human-dependent process that runs inconsistently produces inconsistent results, and over a compounding horizon that is hard to distinguish from having no process at all.

What We Built

We built a dual-MCP (Model Context Protocol) engine that exposes two data sources as agent-callable capabilities. The DataForSEO server provides 12 tools, including ranked keywords, SERP analysis, keyword data, competitor research, and on-page audits. The Google Search Console server provides 9 tools, including striking-distance opportunities, content decay detection, cannibalization analysis, and low-CTR page identification.
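To make "striking-distance" concrete, here is a minimal Python sketch. It is not the engine's actual tool code. It assumes rows shaped like Search Console Search Analytics results (`keys`, `clicks`, `impressions`, `ctr`, `position`) queried with the query and page dimensions. It also assumes that striking distance means an average position of 11 to 20 with at least 100 impressions. The `Opportunity` class, the `striking_distance` function, and both thresholds are illustrative names and values, not part of either MCP server.

```python
from dataclasses import dataclass


@dataclass
class Opportunity:
    query: str
    page: str
    position: float
    impressions: int


def striking_distance(rows, min_pos=11.0, max_pos=20.0, min_impressions=100):
    """Return queries ranking just off page one, highest impressions first.

    The position window and impression floor are assumed defaults, not
    values taken from the engine described in this article.
    """
    found = []
    for row in rows:
        query, page = row["keys"]  # assumes dimensions were [query, page]
        in_window = min_pos <= row["position"] <= max_pos
        if in_window and row["impressions"] >= min_impressions:
            found.append(
                Opportunity(query, page, row["position"], row["impressions"])
            )
    return sorted(found, key=lambda o: o.impressions, reverse=True)


# Hypothetical sample data for illustration only.
sample_rows = [
    {"keys": ["mcp seo engine", "/blog/engine"], "clicks": 4,
     "impressions": 900, "ctr": 0.004, "position": 12.3},
    {"keys": ["aeo checklist", "/blog/aeo"], "clicks": 50,
     "impressions": 1200, "ctr": 0.042, "position": 3.1},
    {"keys": ["seo automation", "/blog/auto"], "clicks": 1,
     "impressions": 40, "ctr": 0.025, "position": 15.0},
]
hits = striking_distance(sample_rows)
```

In this sample, only the first row qualifies: the second already ranks on page one, and the third falls below the impression floor.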

A recurring Claude task runs on a weekly schedule. It ingests the tool outputs, identifies the highest-leverage opportunities across keyword gaps, decaying content, and on-page issues, and then either commissions content updates directly or posts a prioritized action brief to Slack.
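The choice between commissioning an update and posting to Slack can be sketched as a simple routing rule. This is an assumption about how such a decision might be expressed, not the rule the engine actually uses. The `Finding` fields, the `auto_fixable` flag, and the `auto_threshold` value are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    page: str
    kind: str                # e.g. "striking_distance", "content_decay", "on_page"
    estimated_impact: float  # hypothetical score, e.g. projected extra clicks per month
    auto_fixable: bool       # hypothetical flag: safe to update without review


def route(findings, auto_threshold=50.0):
    """Split findings into (commission_now, slack_brief), each ranked by impact.

    A finding is commissioned directly only if it is flagged auto-fixable
    and its estimated impact meets the assumed threshold. Everything else
    goes into the brief for a human to review.
    """
    ranked = sorted(findings, key=lambda f: f.estimated_impact, reverse=True)
    commission_now, slack_brief = [], []
    for finding in ranked:
        if finding.auto_fixable and finding.estimated_impact >= auto_threshold:
            commission_now.append(finding)
        else:
            slack_brief.append(finding)
    return commission_now, slack_brief


# Hypothetical findings for illustration only.
sample_findings = [
    Finding("/blog/aeo", "content_decay", 80.0, True),
    Finding("/pricing", "on_page", 120.0, False),
    Finding("/blog/engine", "striking_distance", 30.0, True),
]
commission_now, slack_brief = route(sample_findings)
```

Here the decaying post is commissioned directly, while the pricing page (high impact but not auto-fixable) and the low-impact striking-distance post go to the brief in that order.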

“SEO work is easy to understand and easy to defer. The engine removes the deferral option — it runs whether or not anyone has time for it that week.”

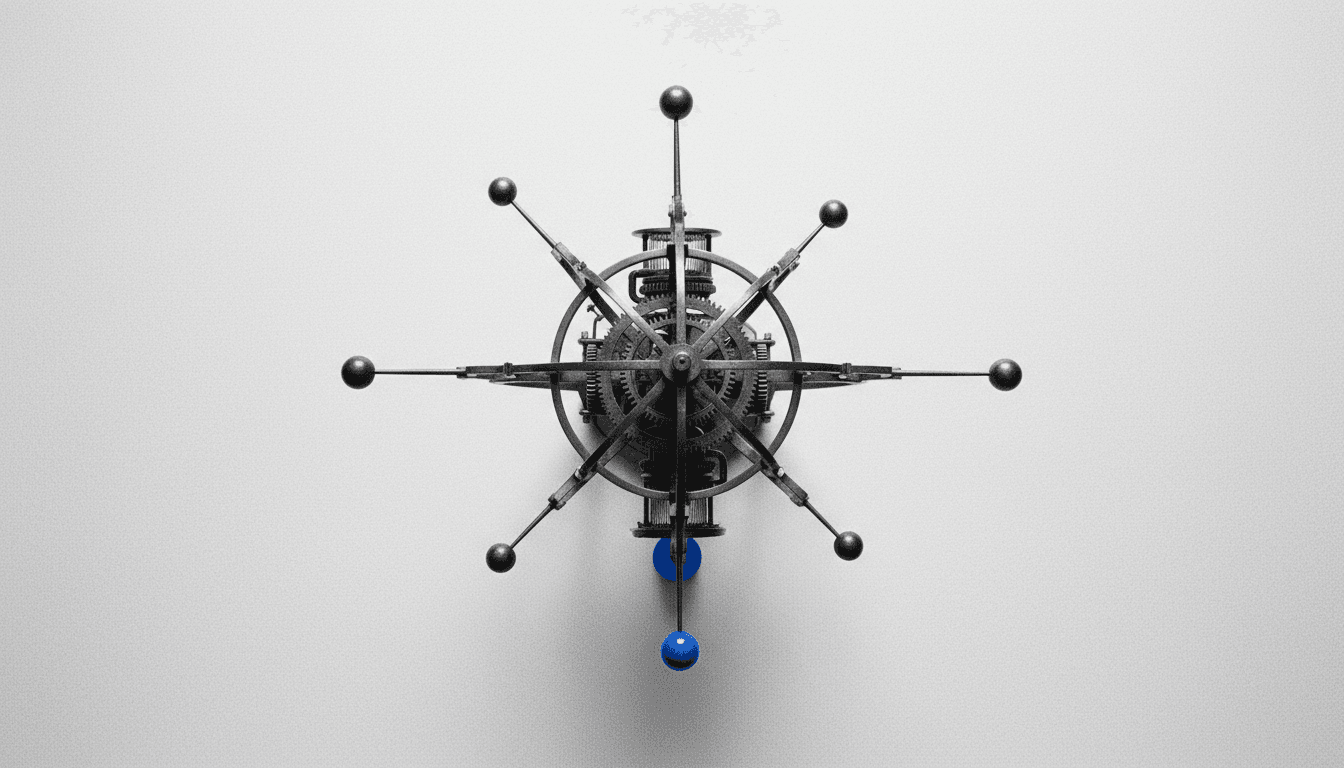

The System Architecture

A DataForSEO MCP connector exposes 12 tools, and a Google Search Console MCP connector exposes 9. A recurring Claude task runs on a weekly schedule and calls both. It produces two kinds of output: direct content updates made through the Automaton CMS MCP, and a prioritized Slack brief with action items ranked by estimated impact.
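As an illustration of the second output, the following sketch renders a brief ranked by estimated impact as plain text. The item keys (`page`, `action`, `estimated_impact`), the heading text, and the `limit` parameter are assumptions made for this example. The sketch only builds the message string and does not call Slack.

```python
def format_brief(items, limit=5):
    """Render the top `limit` action items, highest estimated impact first."""
    ranked = sorted(items, key=lambda i: i["estimated_impact"], reverse=True)
    lines = ["Weekly SEO/AEO brief"]
    for rank, item in enumerate(ranked[:limit], start=1):
        lines.append(
            f"{rank}. {item['page']}: {item['action']} "
            f"(est. impact {item['estimated_impact']:.0f})"
        )
    return "\n".join(lines)


# Hypothetical action items for illustration only.
sample_items = [
    {"page": "/blog/aeo", "action": "refresh intro", "estimated_impact": 40},
    {"page": "/pricing", "action": "rewrite title tag", "estimated_impact": 120},
]
brief_text = format_brief(sample_items)
```

Ranking before truncating means a long list of findings is cut from the bottom, so the brief always leads with the highest-impact items.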

The Results

The result is a self-directing growth engine that runs every week regardless of workload. Striking-distance keywords get targeted content updates before they slip further down the SERP. Decaying content gets refreshed before it loses the rankings it took months to earn.

Every week the engine runs, the site gets marginally better. Every week manual SEO gets skipped, the site gets marginally worse. The engine removes the skip option.